The Gap Between Demo and Production

Everyone has seen the demos. An AI agent that books flights, writes code, files expense reports -- all from a single natural language prompt. These demos are impressive and almost completely misleading about what it takes to build agentic AI systems that work reliably in production.

Over the past year, our team has built and deployed three production agentic AI systems: a customer support agent that handles tier-1 ticket resolution, an internal knowledge retrieval agent for engineering teams, and a vertical AI agent for automated compliance document processing. Each of these taught us something different about the chasm between "works in a notebook" and "runs in production without waking someone up at 2 AM."

This post is a practical breakdown of the architecture, memory systems, human-in-the-loop patterns, and governance frameworks that make agentic AI work for real.

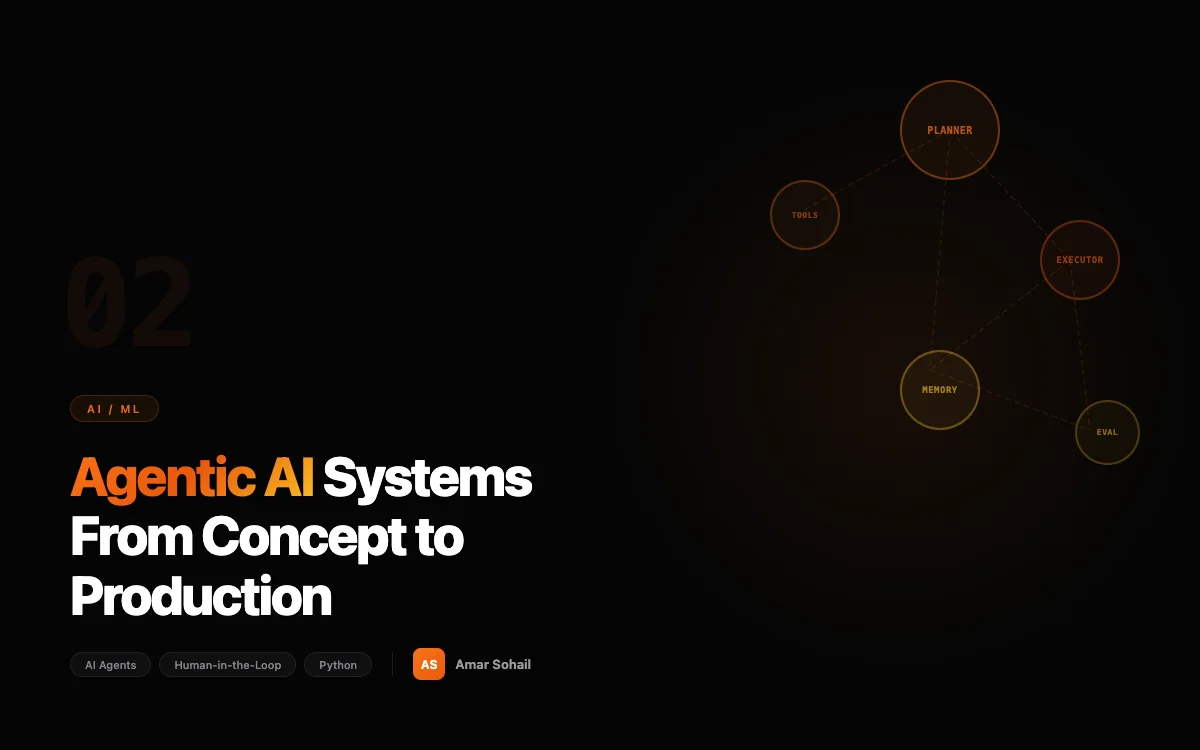

Agent Architecture: Beyond the ReAct Loop

Most agentic AI tutorials start and end with the ReAct (Reasoning + Acting) pattern: the LLM reasons about a task, selects a tool, observes the result, and loops until done. This is a fine starting point, but production agents need considerably more structure.
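For contrast with the layers described below, here is roughly what a bare ReAct loop amounts to. This is a minimal sketch, not any framework's API. `decide` is a hypothetical stand-in for the LLM call, and the scripted version here just looks up one value and then answers. Notice what is missing: there is no plan, no approval gate, and no persistence, and the only safety net is a step budget.

```python
from typing import Callable

# Hypothetical signature: the "model" returns (tool_name, tool_input),
# or ("finish", answer) once it is done.
Decide = Callable[[list[str]], tuple[str, str]]


def react_loop(decide: Decide, tools: dict[str, Callable[[str], str]], max_steps: int = 5) -> list[str]:
    """Minimal ReAct-style loop: reason (decide), act (call a tool), observe, repeat."""
    transcript: list[str] = []
    for _ in range(max_steps):
        action, arg = decide(transcript)
        if action == "finish":
            transcript.append(f"answer: {arg}")
            return transcript
        observation = tools[action](arg)  # act, then feed the observation back in
        transcript.append(f"{action}({arg}) -> {observation}")
    transcript.append("stopped: step budget exhausted")
    return transcript


def scripted_decide(transcript: list[str]) -> tuple[str, str]:
    # Scripted stand-in for the LLM: look one thing up, then answer with it.
    if not transcript:
        return ("lookup", "ticket-42")
    return ("finish", transcript[-1].split(" -> ")[1])


ticket_status = {"ticket-42": "open"}
print(react_loop(scripted_decide, {"lookup": lambda key: ticket_status[key]}))
# ['lookup(ticket-42) -> open', 'answer: open']
```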

Our agent architecture has four distinct layers:

The Planning Layer

Before executing anything, the agent decomposes the user's request into a structured plan. This is not just chain-of-thought reasoning -- it is a concrete execution graph with dependencies, estimated costs, and rollback strategies.

```python
from pydantic import BaseModel
from typing import Optional


class AgentStep(BaseModel):
    step_id: str
    action: str
    tool_name: str
    parameters: dict
    depends_on: list[str] = []
    requires_approval: bool = False
    estimated_cost_usd: Optional[float] = None
    rollback_action: Optional[str] = None


class ExecutionPlan(BaseModel):
    plan_id: str
    objective: str
    steps: list[AgentStep]
    total_estimated_cost: float
    risk_level: str  # "low", "medium", "high"

    def requires_human_approval(self) -> bool:
        return (
            self.risk_level == "high"
            or self.total_estimated_cost > 5.0
            or any(s.requires_approval for s in self.steps)
        )
```

The requires_human_approval method is critical. Any plan that exceeds a cost threshold, is flagged as high-risk, or contains steps marked for approval gets routed to a human before execution begins. This is not a nice-to-have -- it is a hard requirement from our legal and compliance teams.
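The three conditions are OR-ed, so any one of them is enough to pause the plan, and the cost comparison is strict. The sketch below mirrors `requires_human_approval` with plain dataclasses (the `Step` and `Plan` names are ours, chosen so it runs without pydantic) and walks through a few illustrative cases:

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    step_id: str
    requires_approval: bool = False


@dataclass
class Plan:
    steps: list[Step] = field(default_factory=list)
    total_estimated_cost: float = 0.0
    risk_level: str = "low"

    def requires_human_approval(self) -> bool:
        # Same three OR-ed conditions as ExecutionPlan.requires_human_approval
        return (
            self.risk_level == "high"
            or self.total_estimated_cost > 5.0
            or any(s.requires_approval for s in self.steps)
        )


cases = {
    "cheap, low risk": Plan([Step("s1"), Step("s2")], total_estimated_cost=0.40),
    "one flagged step": Plan([Step("s1"), Step("s2", requires_approval=True)], total_estimated_cost=0.40),
    "exactly $5.00": Plan([Step("s1")], total_estimated_cost=5.00),
    "just over $5.00": Plan([Step("s1")], total_estimated_cost=5.01),
    "high risk, free": Plan([Step("s1")], risk_level="high"),
}
for name, plan in cases.items():
    print(f"{name}: {plan.requires_human_approval()}")
```

Only the first and third cases run without a human. Note the boundary: a plan estimated at exactly $5.00 passes autonomously, so if the threshold is meant to be inclusive, the comparison should be `>=`.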

The Execution Layer

The execution layer walks the plan graph, calling tools and handling failures. The key design decision here is step-level isolation: each step runs in its own context with its own error handling, and a step failure does not necessarily abort the entire plan.

```python
from enum import Enum


class StepStatus(Enum):
    PENDING = "pending"
    RUNNING = "running"
    COMPLETED = "completed"
    FAILED = "failed"
    ROLLED_BACK = "rolled_back"


class AgentExecutor:
    # _topological_sort, _handle_step_failure and _is_critical_step are helpers
    # not shown here; ExecutionPlan is the planning-layer model above.

    def __init__(self, tool_registry, memory_store, approval_service):
        self.tools = tool_registry
        self.memory = memory_store
        self.approvals = approval_service

    async def execute_plan(self, plan: ExecutionPlan) -> dict:
        # Gate the whole plan before any step runs
        if plan.requires_human_approval():
            approval = await self.approvals.request_approval(plan)
            if not approval.granted:
                return {"status": "rejected", "reason": approval.reason}

        results = {}
        for step in self._topological_sort(plan.steps):
            # Dependencies ran earlier in this order; skip the step if any of
            # them failed instead of raising KeyError on the missing result
            if any(dep not in results for dep in step.depends_on):
                continue
            dep_results = {dep: results[dep] for dep in step.depends_on}

            try:
                tool = self.tools.get(step.tool_name)
                result = await tool.execute(step.parameters, context=dep_results)
                results[step.step_id] = result
                await self.memory.record_step(plan.plan_id, step, result)
            except Exception as e:
                await self._handle_step_failure(plan, step, e, results)
                if self._is_critical_step(step):
                    break

        return results
```

Notice the memory.record_step call. Every action the agent takes is persisted. This is not just for debugging -- it is the foundation of the agent's persistent memory system.
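The executor calls `self._topological_sort(plan.steps)` without showing it. One reasonable implementation is Kahn's algorithm, sketched below under the assumption that each step exposes `step_id` and `depends_on` as `AgentStep` does (`Step` here is a minimal stand-in). Because steps run in this order, every dependency has already been attempted by the time a step starts, and a plan with a cycle or a dangling dependency is rejected before any tool runs.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Step:
    step_id: str
    depends_on: list[str] = field(default_factory=list)


def topological_sort(steps: list[Step]) -> list[Step]:
    """Order steps so each comes after all of its dependencies (Kahn's algorithm).

    Raises ValueError on an unknown dependency or a cycle.
    """
    by_id = {s.step_id: s for s in steps}
    indegree = {s.step_id: 0 for s in steps}
    dependents: dict[str, list[str]] = {s.step_id: [] for s in steps}
    for s in steps:
        for dep in s.depends_on:
            if dep not in by_id:
                raise ValueError(f"step {s.step_id!r} depends on unknown step {dep!r}")
            indegree[s.step_id] += 1
            dependents[dep].append(s.step_id)

    # Start from steps with no dependencies, keeping the plan's original order
    ready = deque(s.step_id for s in steps if indegree[s.step_id] == 0)
    ordered: list[Step] = []
    while ready:
        step_id = ready.popleft()
        ordered.append(by_id[step_id])
        for child in dependents[step_id]:
            indegree[child] -= 1
            if indegree[child] == 0:
                ready.append(child)

    # Any step never released must sit on a cycle
    if len(ordered) != len(steps):
        raise ValueError("plan contains a dependency cycle")
    return ordered


plan = [Step("report", ["fetch", "lookup"]), Step("fetch"), Step("lookup", ["fetch"])]
print([s.step_id for s in topological_sort(plan)])  # ['fetch', 'lookup', 'report']
```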

The Tool Layer

Tools are the agent's interface to the outside world. We treat them as a strict API boundary with input validation, output schemas, and rate limiting.

```python
from abc import ABC, abstractmethod

from pydantic import BaseModel


class ToolExecutionError(Exception):
    """Raised when a tool refuses or fails to execute a call."""


class ToolResult(BaseModel):
    success: bool
    data: dict
    tokens_used: int
    latency_ms: float


class BaseTool(ABC):
    name: str
    description: str
    requires_approval: bool = False
    max_calls_per_minute: int = 30

    @abstractmethod
    async def execute(self, parameters: dict, context: dict) -> ToolResult:
        pass

    @abstractmethod
    def validate_parameters(self, parameters: dict) -> bool:
        pass


class DatabaseQueryTool(BaseTool):
    name = "database_query"
    description = "Execute a read-only SQL query against the analytics database"
    requires_approval = False
    max_calls_per_minute = 10

    BLOCKED_KEYWORDS = ["DROP", "DELETE", "UPDATE", "INSERT", "ALTER", "TRUNCATE"]

    def __init__(self, db):
        # db: async database client, connected as a read-only user
        self.db = db

    def validate_parameters(self, parameters: dict) -> bool:
        query = parameters.get("query")
        return isinstance(query, str) and bool(query.strip())

    async def execute(self, parameters: dict, context: dict) -> ToolResult:
        if not self.validate_parameters(parameters):
            raise ToolExecutionError("Missing or empty 'query' parameter.")
        query = parameters["query"]

        # Hard block on write operations
        if any(kw in query.upper() for kw in self.BLOCKED_KEYWORDS):
            raise ToolExecutionError(
                "Write operations are not permitted. Query contained a blocked keyword."
            )

        # Execute with statement timeout
        result = await self.db.execute(query, timeout_seconds=30)

        return ToolResult(
            success=True,
            data={"rows": result.rows, "row_count": len(result.rows)},
            tokens_used=0,
            latency_ms=result.latency_ms,
        )
```

The BLOCKED_KEYWORDS list is a blunt instrument, and we know it. But blunt instruments are exactly right for safety boundaries in agentic systems. We would rather have a false positive that blocks a legitimate query than allow the agent to accidentally mutate production data. Defense in depth: the database user the agent connects with also has read-only permissions, so even if the keyword filter fails, the database rejects the write.
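To make the trade-off concrete, the same substring check can be exercised on its own. `is_blocked` below is a standalone copy of the test in `DatabaseQueryTool.execute`; the sample queries are illustrative:

```python
BLOCKED_KEYWORDS = ["DROP", "DELETE", "UPDATE", "INSERT", "ALTER", "TRUNCATE"]


def is_blocked(query: str) -> bool:
    # Same test as DatabaseQueryTool.execute: case-insensitive substring match
    return any(kw in query.upper() for kw in BLOCKED_KEYWORDS)


sample_queries = [
    "DELETE FROM orders WHERE id = 7",     # a real write
    "select id, total from orders",        # an ordinary read
    "SELECT last_updated FROM orders",     # a read that contains UPDATE
    "SELECT count(*) FROM insertion_log",  # a read that contains INSERT
    "CREATE TABLE scratch (id int)",       # a write the list does not cover
]
for q in sample_queries:
    print(is_blocked(q), q)
```

The third and fourth queries are reads that get blocked because `UPDATE` and `INSERT` appear inside identifiers, which is exactly the false positive described above. The last one slips through, since the list omits statements such as `CREATE` and `GRANT`, so the keyword filter is the first line of defense rather than the last.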

Persistent Memory: The Differentiator

The difference between a chatbot and an agent is memory. Our agents maintain three types of persistent memory:

Episodic memory records what the agent has done -- every plan, every step, every result. This lets the agent reference past interactions ("Last time you asked about Q3 revenue, the number was $4.2M from the analytics dashboard").

Semantic memory stores distilled knowledge -- facts, preferences, and domain-specific information extracted from past interactions. We store these as embeddings in a vector database (pgvector, not a standalone vector DB -- operational simplicity matters).

Working memory is the agent's scratchpad for the current task. It holds intermediate results, the current plan state, and context from the conversation.

```python
class PersistentMemory:
    # db_pool: asyncpg-style pool ($n placeholders, execute/fetch). Assumes the
    # pgvector and JSON codecs are registered so embeddings and dicts bind directly.

    def __init__(self, db_pool, embedding_model):
        self.db = db_pool
        self.embedder = embedding_model

    async def store_episodic(self, agent_id: str, episode: dict):
        embedding = await self.embedder.encode(episode["summary"])
        await self.db.execute(
            """
            INSERT INTO agent_episodic_memory
                (agent_id, episode_data, summary_embedding, created_at, expires_at)
            VALUES ($1, $2, $3, NOW(), NOW() + INTERVAL '90 days')
            """,
            agent_id, episode, embedding
        )

    async def recall_relevant(
        self, agent_id: str, query: str, limit: int = 5
    ) -> list[dict]:
        query_embedding = await self.embedder.encode(query)
        rows = await self.db.fetch(
            """
            SELECT episode_data,
                   1 - (summary_embedding <=> $2) AS similarity
            FROM agent_episodic_memory
            WHERE agent_id = $1
              AND expires_at > NOW()
            ORDER BY summary_embedding <=> $2
            LIMIT $3
            """,
            agent_id, query_embedding, limit
        )
        return [dict(r) for r in rows]
```

The expires_at field is important. We learned the hard way that unbounded memory causes two problems: storage costs grow linearly forever, and stale memories lead to incorrect agent behavior. Our compliance document agent was referencing a regulatory requirement that had been superseded six months prior because we had not implemented memory expiration. Now, episodic memories expire after 90 days, and semantic memories are refreshed through a weekly reconciliation job.
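The two SQL queries are easier to reason about in miniature. Below is an in-memory sketch of what `recall_relevant` computes, using 2-D toy embeddings in place of real model output: expired episodes are dropped, and the rest are ranked by cosine similarity. pgvector's `<=>` operator returns cosine distance, which is why the query selects `1 - (summary_embedding <=> $2)` as the similarity and orders by the raw distance ascending.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

EPISODE_TTL = timedelta(days=90)  # mirrors INTERVAL '90 days' in store_episodic


@dataclass
class Episode:
    summary: str
    embedding: list[float]
    created_at: datetime

    @property
    def expires_at(self) -> datetime:
        return self.created_at + EPISODE_TTL


def cosine_similarity(a: list[float], b: list[float]) -> float:
    # Assumes non-zero vectors of equal length
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def recall_relevant(episodes: list[Episode], query_embedding: list[float],
                    now: datetime, limit: int = 5) -> list[tuple[str, float]]:
    # WHERE expires_at > NOW(): expired memories are never returned
    live = [e for e in episodes if e.expires_at > now]
    # ORDER BY distance ascending is the same as similarity descending
    live.sort(key=lambda e: cosine_similarity(e.embedding, query_embedding), reverse=True)
    return [(e.summary, round(cosine_similarity(e.embedding, query_embedding), 3))
            for e in live[:limit]]


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
episodes = [
    Episode("refund policy question", [1.0, 0.0], now - timedelta(days=10)),
    Episode("superseded regulation", [0.9, 0.1], now - timedelta(days=120)),  # expired
    Episode("on-call schedule", [0.0, 1.0], now - timedelta(days=5)),
]
print(recall_relevant(episodes, [1.0, 0.0], now))
# [('refund policy question', 1.0), ('on-call schedule', 0.0)]
```

The expired episode would have been the second-best match, which is the failure mode described above: without the expiry filter, a close but stale memory outranks fresher ones.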

Human-in-the-Loop: Where the Agent Stops and the Human Starts

The most important architectural decision in any agentic AI system is the approval boundary -- the line that separates actions the agent can take autonomously from actions that require human confirmation.

We define this boundary using a policy engine, not hardcoded rules:

```python
class ApprovalPolicy:
    """Determines whether an agent action requires human approval."""

    AUTONOMOUS_ACTIONS = {
        "read_database", "search_documents", "summarize_text",
        "format_report", "send_notification_internal"
    }

    REQUIRES_APPROVAL = {
        "send_email_external", "create_ticket", "modify_config",
        "escalate_to_human", "process_refund"
    }

    def evaluate(self, step: AgentStep, context: dict) -> bool:
        # Explicit approval list
        if step.tool_name in self.REQUIRES_APPROVAL:
            return True

        # Cost threshold
        if step.estimated_cost_usd and step.estimated_cost_usd > 1.0:
            return True

        # Confidence threshold from the planning LLM
        if context.get("planning_confidence", 1.0) < 0.7:
            return True

        # Novel action -- agent hasn't performed this type of action before
        if context.get("is_novel_action", False):
            return True

        return False
```

The planning_confidence check deserves attention. When the planning LLM generates a step, we extract its self-reported confidence. If the model is uncertain about what it is doing, we ask a human. This is not a perfect signal -- LLMs are notoriously poor at calibrating their own confidence -- but combined with the other checks, it catches a meaningful number of edge cases.
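The post does not show how the confidence is extracted. Assuming the planner emits each step as JSON with a `confidence` field (a hypothetical format), a defensive parser might look like the sketch below. One design point worth flagging: `ApprovalPolicy.evaluate` uses `context.get("planning_confidence", 1.0)`, so a missing value counts as full confidence. This sketch fails closed instead, mapping missing, malformed, or out-of-range values to 0.0 so the step goes to a human.

```python
import json


def extract_planning_confidence(raw_step: str, default: float = 0.0) -> float:
    """Read a self-reported confidence from a planner step serialized as JSON.

    Assumes a hypothetical {"confidence": <0..1>} field. Missing, malformed,
    or out-of-range values return `default`; 0.0 means "ask a human".
    """
    try:
        confidence = float(json.loads(raw_step).get("confidence"))
    except (ValueError, TypeError, AttributeError):
        # ValueError: invalid JSON or a non-numeric string
        # TypeError: the field is missing (float(None))
        # AttributeError: the JSON is not an object
        return default
    if not 0.0 <= confidence <= 1.0:  # also rejects NaN
        return default
    return confidence


for raw in [
    '{"tool": "create_ticket", "confidence": 0.92}',
    '{"tool": "create_ticket", "confidence": "high"}',
    '{"tool": "create_ticket"}',
    "Sure! Here is the plan...",
]:
    print(extract_planning_confidence(raw))
# 0.92, then 0.0 three times
```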

The is_novel_action flag is even more valuable. If the agent has never performed a particular type of action in production before, it routes to a human for the first few executions. This creates a natural training loop: humans approve or reject, and the agent's behavior boundaries expand based on demonstrated reliability.
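A novelty flag like this needs a counter behind it. The sketch below derives `is_novel_action` from per-tool counts of human-approved executions. `NoveltyTracker` and `required_approvals` are hypothetical names, since the post only says "the first few executions", and resetting the count on a rejection is our choice rather than something the post specifies. In production the counts would need to live in durable storage rather than in process memory.

```python
from collections import defaultdict


class NoveltyTracker:
    """Derives is_novel_action from per-tool counts of human-approved runs."""

    def __init__(self, required_approvals: int = 3):
        # Hypothetical knob: approved runs needed before an action stops being novel
        self.required_approvals = required_approvals
        self.approved_runs: dict[str, int] = defaultdict(int)

    def is_novel(self, tool_name: str) -> bool:
        return self.approved_runs[tool_name] < self.required_approvals

    def record_review(self, tool_name: str, approved: bool) -> None:
        if approved:
            self.approved_runs[tool_name] += 1
        else:
            # Our choice: a rejection resets the count, so the action stays gated
            self.approved_runs[tool_name] = 0


tracker = NoveltyTracker(required_approvals=2)
context = {"is_novel_action": tracker.is_novel("format_report")}  # True on first use
tracker.record_review("format_report", approved=True)
tracker.record_review("format_report", approved=True)
print(tracker.is_novel("format_report"))  # False
```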

AI Governance: The Non-Negotiable Framework

Building agentic AI without a governance framework is irresponsible engineering. Our governance framework covers three dimensions:

Auditability. Every agent action is logged with the full reasoning chain, the plan that generated it, the human approvals (if any), and the outcome. We can reconstruct exactly why the agent took any given action, which is a regulatory requirement for our compliance use case.
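A minimal shape for such a record, assuming one JSON object per step appended to a log. The `AuditRecord` name and field set are illustrative, derived from the items listed above:

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    plan_id: str                # links back to the plan that generated the step
    step_id: str
    tool_name: str
    reasoning: str              # the model's reasoning for taking this step
    approved_by: Optional[str]  # reviewer id, or None if the step ran autonomously
    outcome: str                # e.g. "completed", "failed", "rolled_back"
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json_line(self) -> str:
        # One JSON object per line suits append-only log storage
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    plan_id="plan-7",
    step_id="s2",
    tool_name="create_ticket",
    reasoning="Customer reports a login failure not covered by the knowledge base.",
    approved_by="reviewer-17",
    outcome="completed",
)
print(record.to_json_line())
```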

Containment. Agents operate within explicit capability boundaries. The customer support agent can read tickets, query the knowledge base, and draft responses. It cannot access billing systems, modify customer records, or communicate externally without approval. These boundaries are enforced at the tool layer, not the prompt layer -- prompt-level restrictions are trivially breakable.
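Enforcing containment at the tool layer can be as simple as a registry that refuses to hand out tools outside an agent's allowlist. The sketch below is hypothetical (`ScopedToolRegistry`, `CapabilityError`, and the tool names are ours). It exposes the same `get(tool_name)` call that `AgentExecutor` uses inside its `try` block, so a denied tool surfaces as an ordinary step failure rather than something the model can talk its way around.

```python
class CapabilityError(PermissionError):
    """Raised when an agent requests a tool outside its allowlist."""


class ScopedToolRegistry:
    """Hands out only the tools on one agent's allowlist; the check is in code, not the prompt."""

    def __init__(self, tools: dict[str, object], allowed: set[str]):
        unknown = set(allowed) - set(tools)
        if unknown:
            raise ValueError(f"allowlist names unregistered tools: {sorted(unknown)}")
        self._tools = tools
        self._allowed = frozenset(allowed)

    def get(self, tool_name: str) -> object:
        if tool_name not in self._allowed:
            raise CapabilityError(f"tool {tool_name!r} is outside this agent's boundary")
        return self._tools[tool_name]


# Hypothetical tool names loosely mirroring the support agent described above
all_tools = {name: object() for name in
             ["read_ticket", "query_knowledge_base", "draft_response", "update_billing"]}
support_tools = ScopedToolRegistry(all_tools, {"read_ticket", "query_knowledge_base", "draft_response"})

support_tools.get("read_ticket")  # allowed
try:
    support_tools.get("update_billing")
except CapabilityError as err:
    print(err)  # tool 'update_billing' is outside this agent's boundary
```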

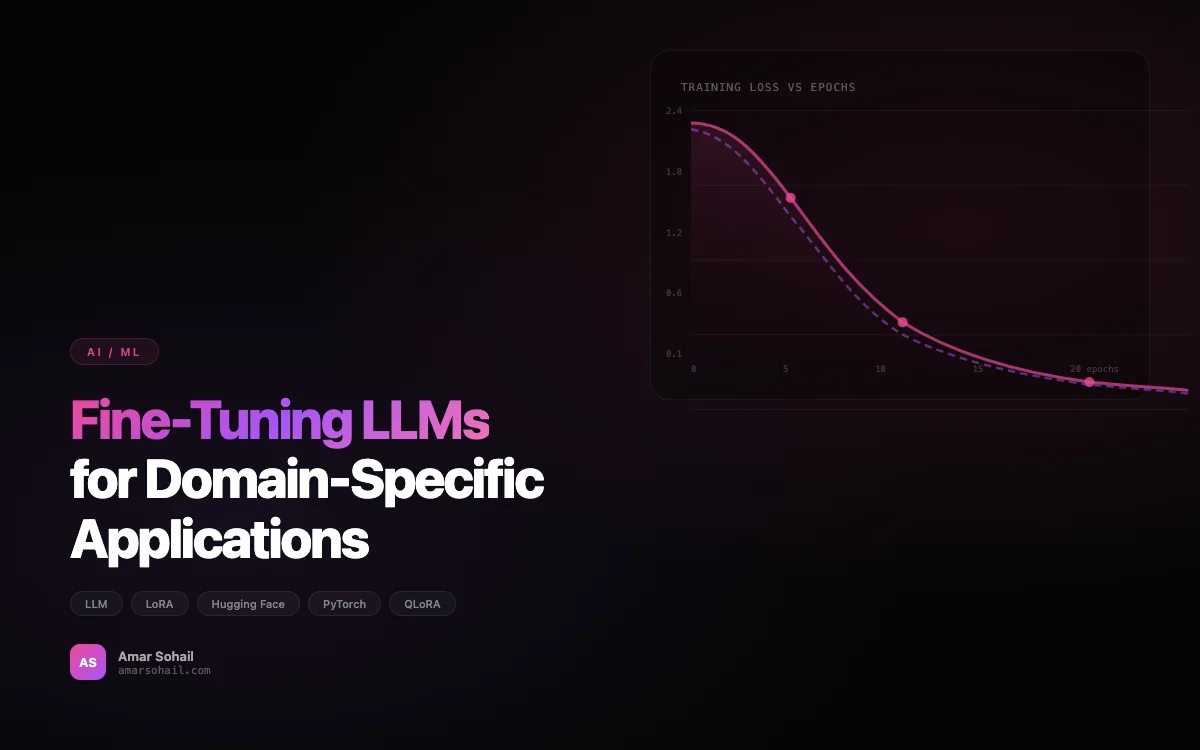

Observability. We track agent-level metrics that go beyond standard application monitoring: task completion rate, human intervention rate, average steps per task, cost per task, and hallucination rate (measured by a separate validation LLM that checks agent outputs against source data).

```python
# Agent metrics we track in production
AGENT_METRICS = {
    "task_completion_rate": "Tasks completed without human takeover / total tasks",
    "human_escalation_rate": "Tasks escalated to humans / total tasks",
    "avg_steps_per_task": "Mean number of tool calls per completed task",
    "cost_per_task_usd": "LLM API cost + tool execution cost per task",
    "p95_latency_seconds": "95th percentile end-to-end task latency",
    "hallucination_rate": "Outputs flagged by validation LLM / total outputs",
    "approval_override_rate": "Human-modified agent proposals / approved proposals",
}
```

The approval_override_rate is the metric I watch most closely. When humans are frequently modifying the agent's proposals rather than approving them as-is, it signals that the agent's planning or tool usage needs retuning. A healthy agent should have an override rate below 15%.
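Computing the rate from per-task records is straightforward. In this sketch, `TaskRecord` is a hypothetical shape and the numbers are made up; the 15% line comes from the paragraph above.

```python
from dataclasses import dataclass

OVERRIDE_ALERT_THRESHOLD = 0.15  # "below 15%" is the healthy range


@dataclass
class TaskRecord:
    escalated: bool          # a human took over the task
    proposals_approved: int  # agent proposals a human signed off on
    proposals_modified: int  # of those, how many the human edited first


def approval_override_rate(tasks: list[TaskRecord]) -> float:
    approved = sum(t.proposals_approved for t in tasks)
    modified = sum(t.proposals_modified for t in tasks)
    return modified / approved if approved else 0.0


def human_escalation_rate(tasks: list[TaskRecord]) -> float:
    return sum(t.escalated for t in tasks) / len(tasks) if tasks else 0.0


week = [
    TaskRecord(escalated=False, proposals_approved=5, proposals_modified=1),
    TaskRecord(escalated=False, proposals_approved=3, proposals_modified=0),
    TaskRecord(escalated=True, proposals_approved=4, proposals_modified=1),
    TaskRecord(escalated=False, proposals_approved=8, proposals_modified=0),
]
rate = approval_override_rate(week)
print(rate, human_escalation_rate(week), rate >= OVERRIDE_ALERT_THRESHOLD)  # 0.1 0.25 False
```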

What We Have Learned

Eighteen months of building agentic AI systems has taught me that the technology is the easy part. The hard parts are:

Defining the right autonomy level. Too autonomous and the agent makes costly mistakes. Too restricted and it is just a chatbot with extra steps. The right level is different for every use case and evolves as the system proves reliability.

Managing user expectations. Users who interact with agents develop trust quickly -- sometimes too quickly. We add explicit disclaimers to agent outputs and maintain clear visual indicators in the UI that distinguish agent-generated content from human-generated content.

Handling the long tail of edge cases. Agents work beautifully for the 80% of cases they were designed for. The remaining 20% is where things get interesting, and expensive. Our human escalation path is not a failure mode -- it is a core feature.

If you are building vertical AI agents, start with a narrow, well-defined use case, build the governance framework before you build the agent, and invest heavily in the human-in-the-loop patterns. The technology to build agents exists today. The discipline to deploy them responsibly is what separates production systems from impressive demos.

For the infrastructure underlying these AI platforms, we cover our multi-cloud provisioning strategy in our Terraform best practices post, and the event-driven backbone that connects our agent services is detailed in our Kafka architecture post.